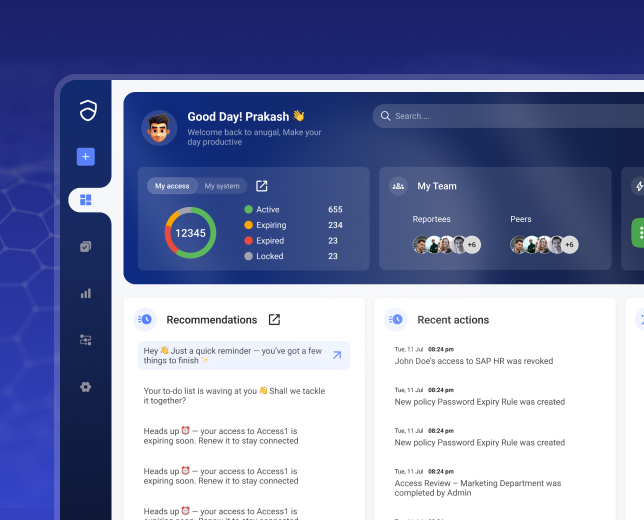

AI-Based Access Recommendations

Reduce access review fatigue without lowering decision quality

Anugal supports access decisions with explainable, context-driven recommendations so reviewers act on evidence.

Turn Access Certifications into Informed, Defensible Decisions

Access certifications fail not for lack of review, but for lack of context. Managers and application owners are asked to validate large volumes of access without clarity on what it enables, whether it is used, how it compares to peers, or whether it introduces risk. Without insight, certifications become routine approvals. Fatigue increases, and risk signals are missed.

Anugal’s AI-based access recommendations enrich each review with usage data, peer comparison, and policy risk indicators at decision time. Low-risk access is easier to validate, while high-risk or unusual access is clearly flagged. AI guides the decision, but final authority always remains with humans.

The Problem with Traditional Access Reviews

- Lack of Context: Entitlement names are technical, abstract, and disconnected from business impact.

- Equal Treatment of Unequal Risk: High-risk and low-risk access receive the same review effort.

- Poor Audit Defensibility: Approvals show who approved but not why the decision was reasonable.

- Reviewer Fatigue: Large review campaigns overwhelm managers, leading to rubber-stamp approvals.

How Anugal Uses AI, Responsibly

Anugal does not use AI to approve or revoke access. AI is used to Analyze, Prioritize, and Recommend, while humans decide.

AI operates within:

- Defined governance and eligibility rules

- Approved role models and access structures

- Risk scoring and policy thresholds

- Continuous audit readiness

- Cross-system orchestration

- Human authorization boundaries and workflow controls

Intelligent Certification Decision Framework

Risk-Aware Prioritization

High-impact access (privileged, financial, SoD-sensitive) is surfaced first.
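As a rough illustration of risk-aware ordering, the sketch below sorts review items so that those carrying more high-impact flags appear first. The `AccessItem` fields, the `risk_rank` scoring, and the sample entitlement names are all hypothetical; they are assumptions made for the example, not Anugal's actual data model or scoring logic.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class AccessItem:
    # Hypothetical shape of a review item; field names are assumptions.
    user: str
    entitlement: str
    privileged: bool = False
    financial: bool = False
    sod_sensitive: bool = False


def risk_rank(item: AccessItem) -> int:
    """Count how many high-impact flags apply; higher means review sooner."""
    return int(item.privileged) + int(item.financial) + int(item.sod_sensitive)


def prioritize(items: List[AccessItem]) -> List[AccessItem]:
    # sorted() is stable, so items with the same rank keep their input order.
    return sorted(items, key=risk_rank, reverse=True)


queue = prioritize([
    AccessItem("asha", "wiki-reader"),
    AccessItem("asha", "erp-payments-approver", financial=True, sod_sensitive=True),
    AccessItem("ravi", "db-admin", privileged=True),
])
for item in queue:
    print(risk_rank(item), item.user, item.entitlement)
```

A real deployment would likely use weighted risk scores and policy thresholds rather than a simple flag count; the count is used here only to keep the ordering idea visible.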

Usage-Informed Recommendations

Unused, rarely used, or dormant access is highlighted during reviews.

Peer-Based Access Analysis

AI compares access against users in similar roles, departments, and locations to identify outliers.
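One simple way to picture peer comparison is to measure how common each of a user's entitlements is among peers and flag the rare ones. The sketch below assumes a hypothetical `access_by_user` mapping and a 20% prevalence threshold; both are illustrative choices, and the approach is a plain frequency check rather than the model Anugal uses.

```python
from collections import Counter
from typing import Dict, Iterable, List, Set


def peer_outliers(
    user: str,
    access_by_user: Dict[str, Set[str]],
    peers: Iterable[str],
    threshold: float = 0.2,  # assumed cut-off: held by fewer than 20% of peers
) -> List[str]:
    """Return the user's entitlements that are rare among the given peers."""
    peer_list = [p for p in peers if p != user]
    if not peer_list:
        return []  # no peers to compare against, so nothing can be called an outlier
    counts = Counter(
        ent for p in peer_list for ent in access_by_user.get(p, set())
    )
    return sorted(
        ent
        for ent in access_by_user.get(user, set())
        if counts[ent] / len(peer_list) < threshold
    )


access = {
    "asha": {"crm-read", "payroll-admin"},
    "ravi": {"crm-read"},
    "meena": {"crm-read"},
    "john": {"crm-read"},
}
outliers = peer_outliers("asha", access, peers=access.keys())
print(outliers)
```

Here the peer group is passed in explicitly, so the caller decides whether "similar" means the same role, department, location, or some combination.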

Decision Context Preservation

Recommendations are recorded alongside reviewer decisions for audit evidence.

How AI-Assisted Certifications Work

Access Data Is Assembled

Anugal collects:

- Current entitlements

- Role memberships

- Application access

- Usage signals

- Risk indicators

This forms the review baseline.

AI Analyzes Context

The AI layer evaluates:

- Peer access patterns

- Role expectations

- Historical approvals and removals

- Policy and SoD relevance

- Dormancy and over-provisioning signals

Raw access lists become meaningful insight.

Recommendations Are Generated

For each access item, AI suggests:

- Retain (aligned with role and peers)

- Remove (unused or anomalous)

- Review carefully (risk-sensitive access)

Each recommendation includes a short explanation.
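The three outcomes above can be sketched as a small rule function that returns both a suggested action and a one-line explanation. The `Signals` fields, the 90-day dormancy window, the 20% peer threshold, and the order in which the rules are checked are all assumptions made for illustration; the source does not specify how Anugal weighs these signals.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional, Tuple


class Action(Enum):
    RETAIN = "Retain"
    REMOVE = "Remove"
    REVIEW = "Review carefully"


@dataclass(frozen=True)
class Signals:
    # Hypothetical per-item inputs; names are assumptions for this sketch.
    days_since_last_use: Optional[int]  # None means no recorded use
    peer_share: float                   # fraction of peers holding the same access
    risk_sensitive: bool                # e.g. privileged or SoD-relevant


def recommend(
    s: Signals, dormant_days: int = 90, min_peer_share: float = 0.2
) -> Tuple[Action, str]:
    """Suggest an action with a short explanation; a human still decides."""
    # Assumed precedence: risk-sensitive access always goes to careful review.
    if s.risk_sensitive:
        return Action.REVIEW, "Risk-sensitive access; review carefully."
    if s.days_since_last_use is None or s.days_since_last_use > dormant_days:
        return Action.REMOVE, f"No use within the last {dormant_days} days."
    if s.peer_share < min_peer_share:
        return Action.REMOVE, "Uncommon among peers in similar roles."
    return Action.RETAIN, "Recently used and aligned with peers."


action, reason = recommend(
    Signals(days_since_last_use=None, peer_share=0.9, risk_sensitive=False)
)
print(action.value, "-", reason)
```

The function only produces a suggestion. Consistent with the text, nothing here approves or revokes access.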

Human Reviewers Decide

Managers and owners:

- See fewer, better-prioritized decisions

- Understand why access should stay or go

- Retain full authority to override recommendations

No decision is taken without human confirmation.

Decisions Become Audit Evidence

Anugal captures:

- Recommendation context

- Reviewer decision

- Rationale (implicit or explicit)

- Execution outcome

Certifications become defensible control evidence, not just approvals.
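To make the idea of decision-level evidence concrete, the sketch below bundles the four captured elements into one record and serializes it as a JSON line. The `CertificationEvidence` class, its field names, and the derived `overrode_recommendation` flag are hypothetical; they show one possible shape for such a record, not Anugal's storage format.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class CertificationEvidence:
    # Hypothetical record layout; every field name is an assumption.
    user: str
    entitlement: str
    recommendation: str         # what the AI suggested
    recommendation_reason: str  # the context shown to the reviewer
    reviewer: str
    decision: str               # what the human decided
    rationale: str              # explicit comment, or empty if implicit
    execution_outcome: str      # what happened after the decision
    decided_at: str             # ISO 8601 timestamp in UTC

    @property
    def overrode_recommendation(self) -> bool:
        return self.decision != self.recommendation


def to_audit_line(evidence: CertificationEvidence) -> str:
    """Serialize one decision as a single JSON line for an audit log."""
    payload = asdict(evidence)
    payload["overrode_recommendation"] = evidence.overrode_recommendation
    return json.dumps(payload, sort_keys=True)


evidence = CertificationEvidence(
    user="asha",
    entitlement="payroll-admin",
    recommendation="Remove",
    recommendation_reason="Uncommon among peers in similar roles.",
    reviewer="ravi",
    decision="Retain",
    rationale="Needed for quarter-end close.",
    execution_outcome="No change",
    decided_at=datetime.now(timezone.utc).isoformat(),
)
line = to_audit_line(evidence)
print(line)
```

Keeping the recommendation and the reviewer's decision in the same record is what lets an auditor see not only who approved, but what evidence was in front of them and whether they overrode it.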

Where AI-Based Recommendations Are Used

- User access certifications

- Privileged access reviews

- Dormant and excess access cleanup

- Role optimization inputs

- Exception and mitigation follow-ups

What This Changes for the Business

- Shorter certification cycles

- Higher review completion rates

- Reduced reviewer fatigue

- Fewer blanket approvals

- Stronger audit defensibility

- Cleaner access posture over time